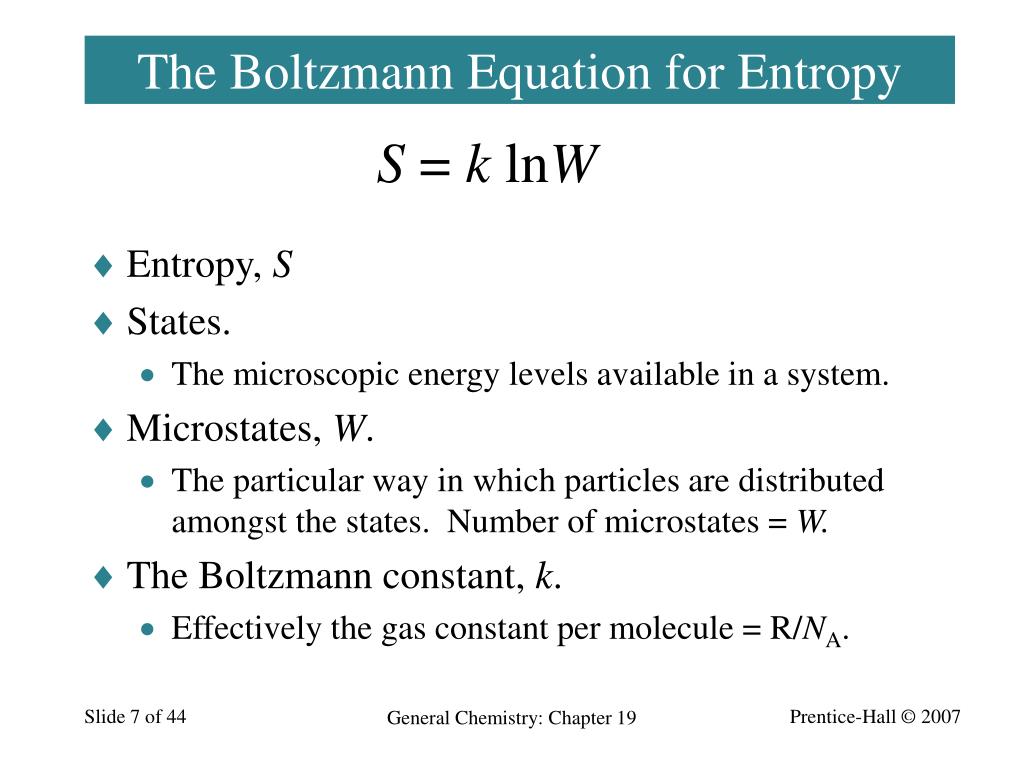

The Stefan-Boltzmann formula for emission of heat from a hot body into space (Hofmeister (2019), Chapter 8) provides an important way to recast Fourier's laws. For a blackbody with uniform internal temperature $T$ that is emitting heat to much colder surroundings, the radiated flux $\Phi$ is

$$\Phi = \sigma_{SB} T^4 \qquad (2.21)$$

where $\sigma_{SB}$ is the Stefan-Boltzmann constant ($5.670 \times 10^{-8}$ W m$^{-2}$ K$^{-4}$).

Time is arguably among the most primitive concepts we have: there can be no action or movement, no memory or thought, except in time. Of course this does not mean that we understand, whatever is meant by that loaded word 'understand', what time is.

In short, the Boltzmann formula shows the relationship between entropy and the number of ways the atoms or molecules of a certain kind of thermodynamic system can be arranged:

$$S = k_B \ln W$$

where $W$ is the effective number of configurations of an isolated system with total energy $E$. In contrast to the Gibbs entropy, which is defined for a probability distribution, the Boltzmann entropy is a function on phase space and is thus defined for an individual system. Our aim is to discuss and compare these two.

This derivation is not new of course, but I decided to write it up anyway because it is a particularly elegant argument and is not always given emphasis in the usual textbooks. Here I document a relatively straightforward derivation of the formula, which might be the easiest route to develop intuition for undergraduate students who already know the formula for the Boltzmann entropy. Let us start by considering some general statistical ensemble such that a state $i$ has energy $E_i$ and probability $p_i$, which is the same for all states with the same energy.

The defining expression for entropy in the theory of information established by Claude E. Shannon in 1948 is of the form

$$H = -\sum_i p_i \log_b p_i$$

where $p_i$ is the probability of the message $m_i$ taken from the message space $M$, and $b$ is the base of the logarithm used. Common values of $b$ are 2, Euler's number $e$, and 10, and the unit of entropy is shannon (or bit) for $b = 2$, nat for $b = e$, and hartley for $b = 10$.
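To make the role of the logarithm base concrete, here is a minimal Python sketch of the Shannon entropy of a discrete message source. The function name `shannon_entropy` and the four-message distribution are assumptions made up for illustration; they do not come from any of the sources quoted above.

```python
import math

def shannon_entropy(probs, base=2.0):
    """Return H = -sum(p * log_b(p)) for a discrete distribution."""
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Terms with p == 0 are skipped: p * log(p) tends to 0 as p -> 0.
    return -sum(p * math.log(p, base) for p in probs if p > 0)

# Hypothetical four-message source, chosen only for illustration.
message_probs = [0.5, 0.25, 0.125, 0.125]

h_bits = shannon_entropy(message_probs, base=2)          # shannons (bits)
h_nats = shannon_entropy(message_probs, base=math.e)     # nats
h_hartleys = shannon_entropy(message_probs, base=10)     # hartleys

print(h_bits, h_nats, h_hartleys)
```

Changing the base only rescales the result by a constant factor (for example, the value in nats is the value in bits times ln 2), which is why the choice of $b$ is a choice of unit rather than of substance.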
The Gibbs entropy formula, $S = -k_B \sum_i p_i \ln p_i$, is actually identical, except for units, to the Shannon entropy formula.
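The "except for units" remark can be checked numerically. The sketch below is only an illustration under stated assumptions: the helper names and the example distributions are hypothetical. It compares the Gibbs entropy with the Shannon entropy in bits rescaled by $k_B \ln 2$, and also checks that a uniform distribution over $W$ states gives back the Boltzmann value $k_B \ln W$.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)

def gibbs_entropy(probs):
    """S = -k_B * sum(p * ln p), in J/K."""
    return -K_B * sum(p * math.log(p) for p in probs if p > 0)

def shannon_entropy_bits(probs):
    """H = -sum(p * log2 p), in bits."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def boltzmann_entropy(num_states):
    """S = k_B * ln W for W equally likely configurations, in J/K."""
    return K_B * math.log(num_states)

# Hypothetical non-uniform distribution over three states.
skewed = [0.7, 0.2, 0.1]
s_skewed = gibbs_entropy(skewed)
s_skewed_from_bits = K_B * math.log(2) * shannon_entropy_bits(skewed)

# Uniform distribution over W = 8 states (W is an arbitrary choice).
W = 8
uniform = [1.0 / W] * W
s_uniform = gibbs_entropy(uniform)
s_boltzmann = boltzmann_entropy(W)

print(s_skewed, s_skewed_from_bits)
print(s_uniform, s_boltzmann)
```

The conversion factor $k_B \ln 2$ is the entropy in J/K that corresponds to one bit.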

Maximum entropy predicts flat distributions: absent any constraint beyond normalization, the flat distribution is the one predicted by the maximum-entropy principle. There are many ways to arrive at the Gibbs entropy formula. Boltzmann's macroscopic formulation leads naturally to a formula for the entropy of dilute gases which may be far from local thermal equilibrium (LTE).
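The claim that maximum entropy favors a flat distribution can be illustrated with a quick numerical experiment. This is a rough sketch, not a proof, and it assumes normalization is the only constraint. The number of states, the random seed, and the helper names are all arbitrary choices made for illustration.

```python
import math
import random

def entropy_nats(probs):
    """H = -sum(p * ln p), in nats."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def random_distribution(n, rng):
    """Draw n positive weights and normalize them to sum to 1."""
    weights = [rng.random() for _ in range(n)]
    total = sum(weights)
    return [w / total for w in weights]

rng = random.Random(0)   # fixed seed so the experiment is repeatable
n_states = 6             # arbitrary number of states

# Entropy of the flat distribution; analytically this is ln(n_states).
flat_entropy = entropy_nats([1.0 / n_states] * n_states)

# Entropies of many randomly drawn distributions over the same states.
trial_entropies = [
    entropy_nats(random_distribution(n_states, rng)) for _ in range(1000)
]

print("flat:", flat_entropy, "best random trial:", max(trial_entropies))
```

None of the random trials should exceed the flat value, consistent with the uniform distribution being the entropy maximizer when nothing else is known about the system.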
